
at Michigan State University

Research

Research in the Adami lab generally falls into three main categories:

Evolution of behavior and intelligence. We embed agents in a simulated environment and observe how evolution shapes the agent’s behavior over long time periods. With these projects, we often aim to discover the conditions that evolve agents that are capable of dynamic, predictive behavior.

Evolutionary processes. Using simplified model systems such as Avida and the NK landscape, we study how evolution shapes populations over long time periods.

Applications of evolution. In these projects, we use evolution as a tool to design classifier systems, predictive machines, and various other engineering tools that are useful in our everyday life.

As you can see, the majority of the research in the Adami lab focuses on evolution in one form or another. Below are a few of the many research projects currently ongoing in the lab.

Evolution of behavior and intelligence

Markov Network Brains

A large part of our research effort focuses on the development and application of Markov Network Brains (MNBs). Many of the projects below use MNBs as a core component. MNBs have applications in artificial intelligence, robotics, classification, evolution of animal behavior, and much more. Visit the MNB user page for more information about MNBs.
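To give a rough idea of the kind of building block involved, here is a minimal sketch of a single probabilistic logic gate. It assumes that a gate reads a few binary state nodes, looks up a row of a probability table, and samples an output pattern from that row. The class name `ProbabilisticGate`, its fields, and the choice to OR outputs into the next state are illustrative assumptions, not the lab's implementation.

```python
import random


class ProbabilisticGate:
    """Hypothetical gate: maps binary inputs to binary outputs via a probability table."""

    def __init__(self, inputs, outputs, table):
        self.inputs = inputs    # indices of the state nodes the gate reads
        self.outputs = outputs  # indices of the state nodes the gate writes
        # table[row][col] = P(output pattern col | input pattern row)
        self.table = table

    def update(self, state, next_state, rng):
        # Encode the input bits as a row index (first input is the most significant bit).
        row = 0
        for idx in self.inputs:
            row = (row << 1) | state[idx]
        # Sample an output pattern according to the probabilities in that row.
        weights = self.table[row]
        col = rng.choices(range(len(weights)), weights=weights)[0]
        # Decode the pattern into bits and OR them into the next state (an assumption).
        for pos, idx in enumerate(reversed(self.outputs)):
            next_state[idx] |= (col >> pos) & 1


# A deterministic special case: a table that behaves like a logical AND.
AND_TABLE = [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]


def run_gate(gate, state, seed=0):
    """Apply one gate to a state and return the resulting next state."""
    next_state = [0] * len(state)
    gate.update(state, next_state, random.Random(seed))
    return next_state
```

In an evolutionary setting, the wiring (`inputs`, `outputs`) and the table entries would be the quantities encoded in a genome and shaped by selection; here they are simply written by hand.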

How and why does swarming behavior evolve?

In our Evolution of Swarming (EOS) platform, we study the basic biological mechanisms that give rise to swarming behaviors of animals in the air, on land, and in the sea. The following questions interest us in this project:

- What selective pressures are conducive to the evolution of swarming behaviors?

- What effect does predation have on swarming behaviors?

- Do swarming behaviors have an effect on predators that hunt swarming animals?

Recently, we discovered that predator confusion can provide sufficient selection pressure to evolve swarming behavior. Below is a video of one of the emergent swarms that evolved when we implemented predator confusion as an indirect selection pressure. The swarm agents are white dots and the predator is the red dot.

Researchers: Randal S. Olson and Dr. Arend Hintze

Contact: olsonran@nullmsu.edu and hintze@nullmsu.edu

Evolutionary game theory

We use evolutionary game theory (EGT) as a simplified model system to study the viability and stability of behavior on evolutionary scales. These projects often help us gain a deeper understanding of how evolution shapes behavior without adding too many complicating factors.

The following questions in EGT are of interest to us:

- What conditions promote the evolution of cooperation?

- What conditions sustain cooperation, and what conditions cause cooperators to defect?

- What effects do population size and population structure have on the evolution of behavior?
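As a baseline for questions like these, the sketch below iterates the textbook replicator equation for a two-strategy game (cooperate or defect) in a well-mixed, infinite population. The payoff values and function names are illustrative assumptions; this is not the model used in our projects, only the simplest setting in which cooperation is expected to fail under Prisoner's Dilemma payoffs.

```python
def replicator_step(x, payoffs, dt=0.01):
    """One Euler step of dx/dt = x (1 - x) (f_C - f_D), where x is the cooperator fraction."""
    reward, sucker, temptation, punishment = payoffs
    # Expected payoff of each strategy against a randomly drawn opponent.
    f_cooperate = reward * x + sucker * (1 - x)
    f_defect = temptation * x + punishment * (1 - x)
    return x + dt * x * (1 - x) * (f_cooperate - f_defect)


def simulate(x0, payoffs, steps=5000, dt=0.01):
    """Iterate the replicator equation and return the final cooperator fraction."""
    x = x0
    for _ in range(steps):
        x = replicator_step(x, payoffs, dt)
    return x


# Illustrative Prisoner's Dilemma payoffs (R, S, T, P) with T > R > P > S.
PRISONERS_DILEMMA = (3.0, 0.0, 5.0, 1.0)

final_fraction = simulate(0.9, PRISONERS_DILEMMA)
print(f"cooperator fraction after simulation: {final_fraction:.3e}")
```

Even when cooperators start at 90% of the population, their share shrinks at every step, because defectors earn more against any mix of opponents. Finite population size and population structure are among the ingredients that can change this outcome.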

Researchers: Jory Schossau and Dr. Arend Hintze

Contact: jory@nullmsu.edu and hintze@nullmsu.edu

Evolutionary processes

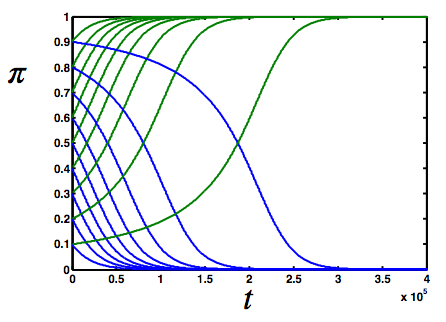

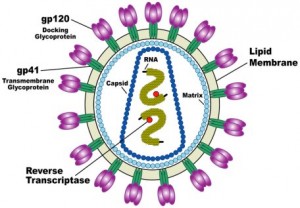

HIV quasispecies evolution and emergence of drug resistance

The high mutability of HIV results in a quasispecies-like virus population in the host. Such high genomic diversity allows drug-escape mutations to accumulate over time. Low-frequency genomic variants of the virus are particularly linked to treatment failures. Since the emergence of drug resistance is one of the biggest challenges in treating HIV infection, we want to understand the following:

1. What is the structure of the viral quasispecies, i.e. the number of genomic variants and their abundances?

2. How does the quasispecies evolve in the presence of drug treatment?

Researchers: Dr. Aditi Gupta and Dr. Yong-Hui Zheng

Contact: agupta@nullmsu.edu

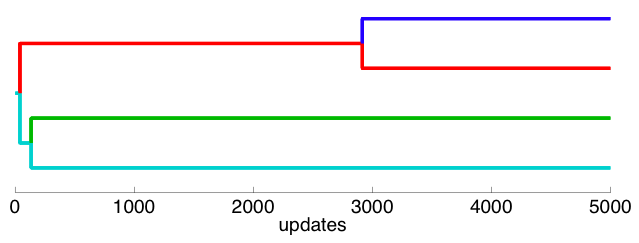

Asexual speciation

Phylogenetic tree of one population splitting into four distinct lineages that have different resource utilization patterns and coexist indefinitely as negative frequency-dependent selection prevents extinctions.

Using an agent-based simulation, we study the dynamics of speciation in asexual organisms. In a model with no spatial structure, speciation (by Van Valen’s 1976 definition) is observed. Organisms evolve to specialize on limiting resources and coexist because of the emerging negative frequency-dependent selection. The number of species depends on the number of resources in the environment and is modulated by the abundance of each of those resources. Additionally, without tradeoffs between the abilities to utilize different resources, speciation does not occur, highlighting the significance of constraints on resource use.

Researcher: Dr. Bjørn Østman

Contact: ostman@nullmsu.edu

Evolution of Shannon’s information content

In this project, we track the evolution of multiple traits of digital organisms, such as information content, within the Avida system to see how information emerges and is maintained in the genomes of organisms. We use the Price equation, a precise linear estimate of trait variation in an evolving population, to quantify the selective pressures on information and other traits, and to show that information is strongly selected for while other traits (such as genome length) are only weakly selected.
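For reference, the Price equation in its standard form splits the change in a population's mean trait value into a selection term and a transmission term: Δz̄ = Cov(w, z)/w̄ + E[w Δz]/w̄, where z is a parent's trait value, w its number of offspring, and Δz the difference between the mean trait of its offspring and its own. The sketch below computes both terms for a toy population; the function name and the numbers are illustrative assumptions and are not taken from our Avida experiments.

```python
def price_decomposition(z_parent, w, z_offspring):
    """Return the (selection, transmission) terms of the Price equation.

    z_parent    -- trait value of each parent
    w           -- number of offspring of each parent (absolute fitness)
    z_offspring -- mean trait value among each parent's offspring
                   (the value is irrelevant for parents with no offspring)
    """
    n = len(z_parent)
    w_bar = sum(w) / n
    z_bar = sum(z_parent) / n
    # Selection: population covariance between fitness and trait, scaled by mean fitness.
    covariance = sum((wi - w_bar) * (zi - z_bar) for wi, zi in zip(w, z_parent)) / n
    selection = covariance / w_bar
    # Transmission: fitness-weighted average change from parent to offspring.
    weighted_change = sum(
        wi * (zo - zi) for wi, zi, zo in zip(w, z_parent, z_offspring)
    ) / n
    transmission = weighted_change / w_bar
    return selection, transmission


# Toy population: four parents, trait values 1..4, one lineage mutates upward.
parents = [1.0, 2.0, 3.0, 4.0]
offspring_counts = [0, 1, 2, 1]
offspring_means = [1.0, 2.0, 3.5, 4.0]

selection, transmission = price_decomposition(parents, offspring_counts, offspring_means)

# The two terms add up to the directly measured change in the mean trait.
new_mean = sum(w * z for w, z in zip(offspring_counts, offspring_means)) / sum(offspring_counts)
old_mean = sum(parents) / len(parents)
print(selection, transmission, new_mean - old_mean)
```

A trait under strong selection shows up as a large selection term relative to the transmission term, which is the kind of comparison the decomposition makes possible.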

Researcher: Masoud Mirmomeni

Contact: mirmomen@nullcse.msu.edu

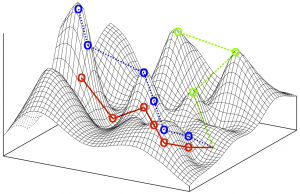

Phenotypic plasticity in rugged fitness landscapes

In this project, we study the effects of phenotypic plasticity in simulated rugged fitness landscapes such as the NK landscape and Avida. We seek to answer the following questions:

- What biological mechanisms give rise to plastic phenotypes?

- How does phenotypic plasticity affect the rate of evolution?

- How does phenotypic plasticity affect the evolutionary trajectory of the population?

- Does phenotypic plasticity enable the population to avoid converging on local maxima?

- Does phenotypic plasticity allow for the population to perform more fitness valley crossings?
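To make "rugged" concrete, here is a minimal sketch of a standard NK landscape: each of N binary loci contributes a random fitness value that depends on its own state and on the states of K other loci, and a genome's fitness is the mean contribution. The function names, the random choice of interacting loci, and the parameter values are illustrative assumptions rather than our experimental setup. The helper counts local maxima, the genomes that no single-bit mutation can improve.

```python
import itertools
import random


def make_nk_landscape(n, k, seed=0):
    """Build a random NK fitness function over binary genomes of length n."""
    rng = random.Random(seed)
    # Each locus interacts with k other loci chosen at random.
    neighbors = [rng.sample([j for j in range(n) if j != i], k) for i in range(n)]
    # One random contribution per locus for every configuration of its k + 1 relevant bits.
    tables = [
        {bits: rng.random() for bits in itertools.product((0, 1), repeat=k + 1)}
        for _ in range(n)
    ]

    def fitness(genome):
        total = 0.0
        for i in range(n):
            key = (genome[i],) + tuple(genome[j] for j in neighbors[i])
            total += tables[i][key]
        return total / n

    return fitness


def is_local_maximum(genome, fitness):
    """True if no single-bit mutant has strictly higher fitness."""
    base = fitness(genome)
    for i in range(len(genome)):
        mutant = list(genome)
        mutant[i] ^= 1
        if fitness(mutant) > base:
            return False
    return True


def count_local_maxima(n, k, seed=0):
    """Exhaustively count local maxima (only feasible for small n)."""
    fitness = make_nk_landscape(n, k, seed)
    genomes = itertools.product((0, 1), repeat=n)
    return sum(is_local_maximum(g, fitness) for g in genomes)


for k in (0, 2, 4):
    print(f"N=8, K={k}: {count_local_maxima(8, k)} local maxima")
```

With K = 0 the loci are independent, so the landscape has a single peak that hill climbing always reaches. Raising K couples the loci and typically produces more peaks, which is what creates fitness valleys for a population to cross.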

Researcher: Randal S. Olson

Contact: olsonran@nullmsu.edu

Applications of evolution

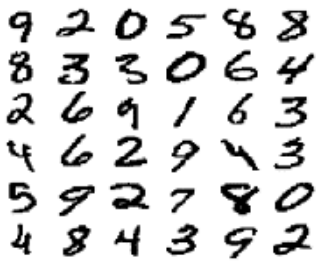

Darwin vs. DARPA

The highly publicized DARPA project “SyNAPSE” aims at developing neuromorphic machine technology that would ultimately support artificial intelligence, for a project cost to date of $42 million. The design is based on fairly standard neural network technology rendered in hardware, and appears to be difficult to program, with only limited learning capacity. Rather than demonstrate the scalability of a neuromorphic computation paradigm, many expect that this project will instead demonstrate that human design of controllers for complex tasks is impossible.

We propose instead to evolve a controller with specifications similar to those of DARPA’s neuromorphic chip, but using a novel computational framework (stochastic Markov networks) in which the connectivity of computational nodes and the learning behavior of each gate are evolved with a genetic algorithm. We intend to match the performance of DARPA’s chip (recognition of single numerals and playing the computer game “Pong”) within the first 6 months of the project, then move on to evolve a controller for a scaled-down version of the game “Tetris”, using Markov brains where each gate is run on a single core of a GPU.

Success in this project would have ramifications that may be felt across the entire AI community, as it would show that evolution beats design, even when the design is based on modern biological principles and has substantial institutional (DARPA and IBM) as well as financial support behind it. Furthermore, it would show that evolution beats design at a fraction of the cost.

Researchers: Sam Chapman and Dr. David Knoester

Contact: chapm236@nullmsu.edu and dk@nullmsu.edu

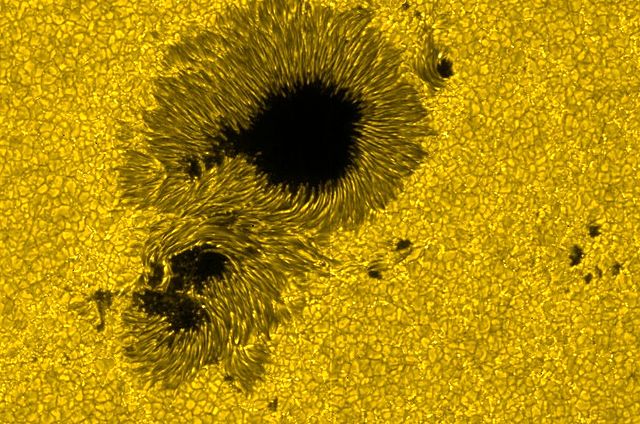

Sunspot number forecasting with evolved prediction systems

In this project, we use Markov networks to design an accurate alarm system that helps modern technologies avoid space weather hazards by predicting solar and geomagnetic activity indices. After estimating the prediction horizon, we evolve a Markov network that can accurately predict these indices out to that horizon. Knowing the values of these solar and geomagnetic measures helps us forecast upcoming geomagnetic anomalies and storms.

Researchers: Masoud Mirmomeni and Dr. David Knoester

Contact: mirmomen@nullcse.msu.edu and dk@nullmsu.edu

Applications of evolutionary algorithms in robotics

This project studies how evolutionary algorithms can be applied to robotics through the use of Markov network brains. By implementing evolved Markov brains in embedded systems, we can study how well a simulated brain crosses the simulation/reality gap, which provides a route to real-world applications of machine learning in robotics. This jump to the “real world” raises some questions:

- Is there a template for creating/intertwining worlds and fitness functions?

- What methods are there to manifest continuous motion out of discrete output?

- Will evolved, Markov-based, feedback learning enable machines to generate adaptive models of the real world?

Researcher: Dr. Arend Hintze

Contact: hintze@nullmsu.edu